Fog Implementation

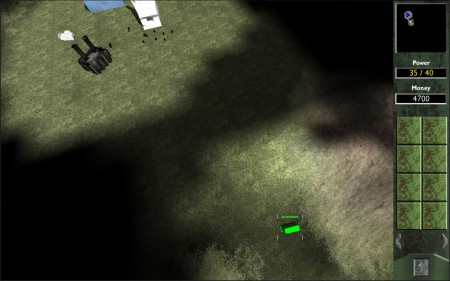

I wanted to go into some detail about how the fog component in the RTS project worked, as it was one of the more specialised features that you rarely find in other genres. I decided to use a three-state implementation:

- Undiscovered

- Discovered

- Revealed

The fog begins completely undiscovered. As units explore, areas become revealed. When a unit then leaves a revealed area, the area is not covered back up completely. Instead it drops back to discovered and is only partially shrouded: the landscape stays visible, but any potentially hostile entities do not.
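The three states and the transitions described above can be sketched as a small state function. This is an illustrative Python sketch rather than the project's Unity code; `FogState` and `next_state` are hypothetical names, and it assumes each fog cell only needs to know whether a friendly unit can currently see it.

```python
from enum import Enum


class FogState(Enum):
    UNDISCOVERED = 0  # never seen: fully shrouded
    DISCOVERED = 1    # seen before: terrain visible, entities hidden
    REVEALED = 2      # currently seen: everything visible


def next_state(current: FogState, visible_now: bool) -> FogState:
    """Return the new state of one fog cell (hypothetical helper)."""
    if visible_now:
        # Any cell a unit can currently see is fully revealed.
        return FogState.REVEALED
    if current is FogState.REVEALED:
        # The unit has left: keep the landscape, hide the entities.
        return FogState.DISCOVERED
    # Undiscovered and discovered cells stay as they are.
    return current
```

Note that a cell never returns to undiscovered once it has been seen, which matches the behaviour described above.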

The fog itself is essentially a low-resolution 64 x 64 texture that is orthographically projected over the entire terrain. It is also rendered on top of the minimap texture, which means I’ve separated the static (terrain) and dynamic (units and icons) draw calls.

To reveal areas of the map, each unit and building casts a ray upwards, masked so that it only hits the fog’s physical layer, which covers the entire map. The hit point is then converted into a texture coordinate by dividing the world position by the terrain size. Because the projection is orthographic, this coordinate maps exactly onto the fog pixel above the unit. The colours of this pixel and its neighbours are then set to black with zero alpha, which allows the revealed area to fade in from the surrounding shroud.
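As a rough illustration of the coordinate conversion, here is a minimal Python sketch rather than the Unity code. It assumes the terrain's corner sits at the world origin and that the fog is stored as a square grid of alpha values; `world_to_texel`, `reveal` and the `radius` parameter are hypothetical names introduced for this example.

```python
FOG_SIZE = 64  # fog texture resolution from the post


def world_to_texel(x, z, terrain_width, terrain_length, fog_size=FOG_SIZE):
    """Map a world-space hit point to a (column, row) fog texel.

    Assumes the terrain starts at world (0, 0); a terrain placed elsewhere
    would need its origin subtracted first.
    """
    u = x / terrain_width   # normalised 0..1 across the terrain
    v = z / terrain_length
    # Scale to texel indices and clamp so points on the far edge stay in range.
    col = min(max(int(u * fog_size), 0), fog_size - 1)
    row = min(max(int(v * fog_size), 0), fog_size - 1)
    return col, row


def reveal(alpha, col, row, radius=1):
    """Set the texel and its neighbours to zero alpha (fully clear)."""
    size = len(alpha)
    for r in range(max(row - radius, 0), min(row + radius, size - 1) + 1):
        for c in range(max(col - radius, 0), min(col + radius, size - 1) + 1):
            alpha[r][c] = 0.0


# Usage: a fully shrouded grid, then a unit standing mid-map reveals a 3 x 3 patch.
fog = [[1.0] * FOG_SIZE for _ in range(FOG_SIZE)]
reveal(fog, *world_to_texel(500.0, 250.0, 1000.0, 1000.0))
```

In the real project the grid would be written back into the fog texture and uploaded after the changes; that step is left out here.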

The downside is that all of this is performed on the CPU, with the obvious exception of drawing the projection itself. To reduce the CPU load that raycasts from a large number of units would cause, raycasts are only performed for 10% of all units per frame, which still results in a fast fog reaction. Because the fog resolution is relatively low, only 64 x 64, areas are revealed in a grid-like pattern, which in this case is fine. A smooth and gradual reveal would most likely be impractical with my implementation, given the sheer number of raycasts and the fog texture resolution it would need.
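One way to spread the raycasts over frames is a round-robin cursor that walks through the unit list one slice at a time. The Python sketch below assumes that approach; the post does not say how the 10% is chosen, and `FogUpdateScheduler` and `frames_per_cycle` are hypothetical names.

```python
class FogUpdateScheduler:
    """Hands out roughly 1/frames_per_cycle of the units each frame."""

    def __init__(self, frames_per_cycle=10):
        self.frames_per_cycle = frames_per_cycle  # 10 frames -> about 10% per frame
        self.cursor = 0

    def next_batch(self, units):
        """Return the units that should raycast this frame."""
        count = len(units)
        if count == 0:
            return []
        # Integer ceiling division: every unit is covered within one cycle.
        batch_size = (count + self.frames_per_cycle - 1) // self.frames_per_cycle
        # Re-wrap the cursor in case units were removed since the last frame.
        self.cursor %= count
        batch = [units[(self.cursor + i) % count] for i in range(batch_size)]
        self.cursor = (self.cursor + batch_size) % count
        return batch


# Usage: with 20 units, each frame processes 2 and all 20 are covered in 10 frames.
scheduler = FogUpdateScheduler()
units = list(range(20))
for _frame in range(10):
    for unit in scheduler.next_batch(units):
        pass  # the upward raycast and texel reveal would happen here
```

With this scheme every unit refreshes its patch of fog once per cycle, so at 60 frames per second the worst-case delay is about a sixth of a second.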

One problem is that Unity handles projections in a rather odd way. As can be seen from the second image, projections are performed on a per-material basis, which causes artifacts on the textures themselves. For example, the bridge has bright spots that match the revealed area of the fog layer. A fix for this would most likely also address the aforementioned downside: a pixel shader on the GPU that handles my own fog implementation without the use of texture projection. This, however, would rule out the raycasting implementation, and I would most likely have to feed unit positions directly into GPU memory using static buffers.